What if Computers Could Think Like Brains, Down to the Hardware Level?

2026-01-28 | By Rose Destor

Environmental RAM / Volatile Memory Resistors

Introduction

The human brain is a marvel of nature, especially when it comes to processing and memory. Unlike computers, it doesn’t rely on GPUs, AI accelerators, RAM, or hard drives, yet it internalizes and stores a lifetime of experiences, emotions, and knowledge. How does our brain do that? Could we develop our computers to mimic the functionalities of our brain?

The Von Neumann Architecture and its limitations

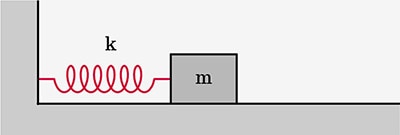

The Von Neumann Architecture [1].

Most modern computers are built on the Von Neumann Architecture, a design proposed in the 1940s. It consists of a central processing unit (CPU) and memory, connected by a data bus. Here’s how it works: data is stored in the memory (DRAM or NAND flash), sent to the CPU via the data bus for processing, then written back to the memory if needed.

While this design has powered decades of innovation, it comes with a major flaw known as the Von Neumann bottleneck. The constant back and forth of data between memory and CPU can consume around 200 times more energy than the calculations themselves. [1] This inefficiency was first identified in 1977 and remains a challenge today.

John von Neumann with the IAS Computer. [2, 3]

As computing demands grow, especially with the rise of deep learning and transformer models like ChatGPT and Veo, this bottleneck becomes even more problematic. Early computer programs were rule-based or statistical [4] and were often still solvable by hand, like Markov chains. [13] Models used today are vastly more complex and require massive computational resources.

A metric ton of CO₂ gas in perspective. [5]

To meet these demands, we’ve turned to graphics processing units (GPUs). Originally designed for rendering graphics, GPUs are now the backbone of AI, as they can process many tasks in parallel. But even with GPUs, the environmental cost is staggering. Training GPT-4 for 90 days on 25,000 A100 GPUs emitted an estimated 522 tonnes of CO₂, and inference alone emits 8.3 tonnes every day. [1]
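To put the two emission figures above on the same footing, here is a small arithmetic sketch. It uses only the numbers quoted in the paragraph; the function and variable names are illustrative, and the calculation assumes emissions were spread evenly over the training run.

```python
# Figures quoted in the paragraph above (attributed there to reference [1]).
TRAINING_EMISSIONS_TONNES = 522.0   # CO2 emitted over the whole training run
TRAINING_DAYS = 90                  # length of the training run in days
INFERENCE_TONNES_PER_DAY = 8.3      # CO2 emitted per day of inference


def training_tonnes_per_day(total_tonnes: float, days: int) -> float:
    """Average daily emissions during training (assumes an even spread)."""
    return total_tonnes / days


def days_for_inference_to_match_training(total_tonnes: float,
                                         inference_per_day: float) -> float:
    """Days of inference needed to emit as much CO2 as the training run."""
    return total_tonnes / inference_per_day


daily_training = training_tonnes_per_day(TRAINING_EMISSIONS_TONNES, TRAINING_DAYS)
break_even_days = days_for_inference_to_match_training(
    TRAINING_EMISSIONS_TONNES, INFERENCE_TONNES_PER_DAY
)

print(f"Training averaged {daily_training:.1f} t CO2 per day")
print(f"Inference matches the training total after about {break_even_days:.0f} days")
```

On these figures, training averaged about 5.8 tonnes per day, so a day of inference emits more than an average day of training did, and inference overtakes the entire training run in roughly two months.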

AI accelerators (e.g., Google TPU, Intel Gaudi2, Apple Neural Engine) offer plug-and-play upgrades to boost the performance of traditional GPUs by 10 to 20 times without replacing entire systems. While they improve efficiency, the overall carbon footprint of AI remains high. At best, server-level AI-accelerated GPUs, including the P100 and V100, demonstrate lower energy consumption, while others, like the Radeon VII and Titan X (Pascal), show little or no energy savings and need further optimization. [6]

Meanwhile, the human brain runs on roughly 20 W of power, which is less electricity than it takes to power a lightbulb [12]. It has its flaws, like forgetting where we left our keys or when our homework is due, but our brains can instantly recognize patterns and images, remember key moments in our lives even from five years ago, do math, and produce art. So, if man-made systems are hitting their limits, maybe it’s time to ask whether a biologically inspired computer would do better.

The neuromorphic architecture

An outline and comparison of our biological neurons and crossbar arrays of neuromorphic chips. [7]

Enter neuromorphic computing, a concept proposed by Carver Mead in the late 1980s. It mimics the brain’s computing style to get around the Von Neumann bottleneck.

Breaking down the words: “neuro” refers to the nervous system, and “morphic” means shape and form. So, “neuromorphic” literally means “having the shape of the nerve.” In computing, it refers to systems that are inspired by the brain’s structure and behavior, both in hardware and software.

Importantly, neuromorphic computing isn’t about replicating the brain. Instead, it borrows ideas from how the brain handles learning, decision-making, and memory and applies them to practical computing tasks.

The memristor: Brain-inspired hardware

On the hardware side, neuromorphic systems use a special device called the memristor, short for memory resistor. Unlike traditional computers, where memory and processing are separate, memristors can store and process data in the same location, just like neurons in the brain.

A closer look at each biological neuron and a memory cell in memristors. [7]

On closer inspection, a memory cell in a memristor can be likened to a biological neuron. The memory cell’s conductive state (i.e., its resistance) usually represents a synaptic weight (i.e., the data it is required to store). Its response to an external voltage stimulus follows Ohm’s law: the higher the resistance, the lower the response current, and a cell whose resistance is high enough is in its “off” state. [7]
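The Ohm’s law relationship described above can be sketched in a few lines. This is a minimal illustration, not a device model: the read voltage and the two resistance values are hypothetical numbers chosen only to show the contrast between a low-resistance (“on”) and a high-resistance (“off”) cell.

```python
def read_current(voltage_v: float, resistance_ohm: float) -> float:
    """Ohm's law: the current through a cell is I = V / R."""
    if resistance_ohm <= 0:
        raise ValueError("resistance must be positive")
    return voltage_v / resistance_ohm


# Hypothetical values, chosen only for illustration.
READ_VOLTAGE_V = 0.2                 # small voltage applied to read the cell
LOW_RESISTANCE_OHM = 10_000.0        # cell in its "on" state
HIGH_RESISTANCE_OHM = 1_000_000.0    # cell in its "off" state

on_current = read_current(READ_VOLTAGE_V, LOW_RESISTANCE_OHM)
off_current = read_current(READ_VOLTAGE_V, HIGH_RESISTANCE_OHM)

print(f"on-state current:  {on_current:.2e} A")
print(f"off-state current: {off_current:.2e} A")
print(f"on/off current ratio: {on_current / off_current:.0f}")
```

With the same read voltage, the high-resistance cell passes a current 100 times smaller than the low-resistance one, which is how the stored state can be told apart when the cell is read.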

The four main memristive switching mechanisms. [8]

A memory cell is built like a sandwich: a top electrode, a switching layer, and a bottom electrode. The resistance of the switching layer can be changed in four main ways: (i) filament formation, (ii) heat, (iii) polarising electric fields, and (iv) magnetic fields. These mechanisms activate the memory cell (SET) or deactivate it (RESET), much like a neuron activating or deactivating depending on the task at hand. [8]
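The SET and RESET behaviour can be pictured as a tiny state machine. The sketch below is a deliberately simplified abstraction: it assumes a cell that switches on above a positive threshold voltage and off below a negative one, and it does not model any of the four physical mechanisms. The class name and threshold values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ToyMemoryCell:
    """Toy two-state cell; thresholds are hypothetical, not device data."""
    set_voltage_v: float = 1.0      # pulses at or above this switch the cell on
    reset_voltage_v: float = -1.0   # pulses at or below this switch the cell off
    is_on: bool = False             # current state of the cell

    def apply_pulse(self, voltage_v: float) -> None:
        """Update the state; pulses between the thresholds leave it unchanged."""
        if voltage_v >= self.set_voltage_v:
            self.is_on = True       # SET
        elif voltage_v <= self.reset_voltage_v:
            self.is_on = False      # RESET


cell = ToyMemoryCell()
history = []
for pulse_v in (0.3, 1.2, 0.3, -1.5):
    cell.apply_pulse(pulse_v)
    history.append(cell.is_on)

print(history)
```

The point of the sketch is that small pulses leave the stored state untouched, so the cell keeps its value between a SET and the next RESET.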

Layout and circuit diagram of an MRAM crossbar array. [9]

Most AI models rely heavily on matrix calculations, so memory cells are arranged in crossbar arrays, with one cell at each intersection of a row wire and a column wire. This layout allows massively parallel data access, and the arrays are sometimes even stacked into 3D crossbar arrays for scalability. [1]
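To see why a crossbar suits matrix calculations, consider an idealised array in which each cell stores a conductance (the inverse of its resistance). Applying voltages to the rows and summing the currents on each column gives a matrix-vector product, by Ohm’s law for each cell and by current summation on each column wire. The sketch below assumes an ideal array with no wire resistance or leakage; the function name and all values are illustrative.

```python
def crossbar_output_currents(conductances_s, input_voltages_v):
    """Column currents of an ideal crossbar: I_j = sum_i V_i * G_ij.

    conductances_s[i][j] is the conductance (in siemens) of the cell that
    joins input row i to output column j.
    """
    if len(conductances_s) != len(input_voltages_v):
        raise ValueError("need exactly one input voltage per row")
    num_cols = len(conductances_s[0])
    currents = [0.0] * num_cols
    for row, voltage in zip(conductances_s, input_voltages_v):
        if len(row) != num_cols:
            raise ValueError("every row needs the same number of columns")
        for j, conductance in enumerate(row):
            # Each cell contributes V * G to its column (Ohm's law).
            currents[j] += voltage * conductance
    return currents


# Hypothetical 3x2 array: three input rows, two output columns.
weights_s = [
    [1e-6, 2e-6],
    [3e-6, 4e-6],
    [5e-6, 6e-6],
]
inputs_v = [0.1, 0.2, 0.3]

column_currents = crossbar_output_currents(weights_s, inputs_v)
print(column_currents)
```

In hardware, every cell contributes its current at the same time, so the whole product is read out in one step rather than being computed cell by cell as in this loop.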

The industry

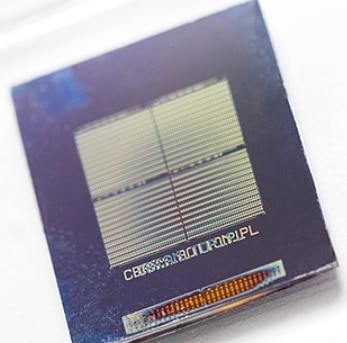

Intel’s Loihi 1, one of the most well-known neuromorphic chips. It features 128 million synapses and consumes less than 1.5W of power during standard use. [11]

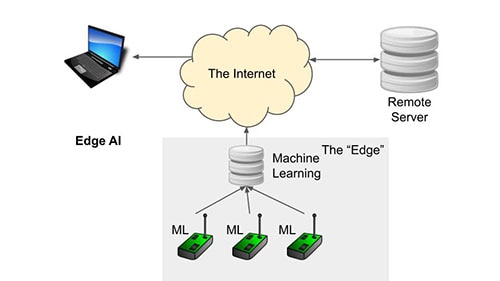

Today, neuromorphic computing systems are showing promising results across a wide range of tasks: object classification, speech and handwriting recognition, and real-time image and signal processing. [7]

Memristors are therefore finding niche success in embedded systems, particularly in AI edge devices, low-power neuromorphic chips, storage-class memory (between RAM and SSD), and embedded non-volatile memory (e.g., in microcontrollers). [1]

When paired with recurrent neural networks (RNNs) and spiking neural networks (SNNs), neuromorphic systems truly show their strength. In a benchmark study by Intel, Loihi showed 1,000 to 10,000 times lower energy consumption and up to 100 times faster solution times for specific tasks. However, not all neural network types benefit equally. Most feed-forward neural networks, which dominate mainstream deep learning, gained little to no performance advantage on Loihi compared to regular GPUs, and in some cases Loihi performed worse than AI-accelerated GPUs. [10]

Reflection: Rethinking how we compute

As we push the boundaries of AI and computing, it’s clear that traditional architectures are struggling to keep up, both in terms of performance and sustainability. Neuromorphic computing offers a fresh perspective, inspired by the most efficient processor we know, the human brain.

While still in the early stages of development and adoption, memristor-based hardware and even brain-like algorithms, which we will cover in part 2, show promise. It’s not about copying biology perfectly but about learning from it. And maybe, just maybe, the future of computing lies not in more power, more chips, and more data, but in smarter and more sustainable design.

Bibliography

[1] Lanza, Mario, Sebastian Pazos, Fernando Aguirre, Abu Sebastian, Manuel Le Gallo, Syed M Alam, Sumio Ikegawa, et al. “The Growing Memristor Industry.” Nature 640, no. 8059 (April 16, 2025): 613–22. https://doi.org/10.1038/s41586-025-08733-5.

[2] “The First Generation - CHM Revolution,” n.d. https://www.computerhistory.org/revolution/birth-of-the-computer/4/92.

[3] Thilakarathne, Sakya. “What Is the Structure of IAS and How It Operates?” Medium, June 9, 2023. https://medium.com/@sakyathilakarathne96/what-is-the-structure-of-ias-and-how-it-operates-87ac5cc73e2f.

[4] Rufai, Amina Mardiyyah. “Statistical Machine Translation: A Data Driven Translation Approach.” Medium, July 21, 2022. https://mardiyyah.medium.com/statistical-machine-translation-a-data-driven-translation-approach-8a5dc15ba057.

[5] Carbon Visuals. “The Case for Carbon Capture & Storage.” September 14, 2016. https://www.carbonvisuals.com/projects/wbcsd.

[6] Wang, Yuxin, Qiang Wang, Shaohuai Shi, Xin He, Zhenheng Tang, Kaiyong Zhao, and Xiaowen Chu. “Benchmarking the Performance and Power of AI Accelerators for AI Training.” arXiv (Cornell University), 2020. https://arxiv.org/abs/1909.06842v5.

[7] Wang, Shengbo, Lekai Song, Wenbin Chen, Guanyu Wang, En Hao, Cong Li, Yuhan Hu, et al. “Memristor‐Based Intelligent Human‐Like Neural Computing.” Advanced Electronic Materials 9, no. 1 (October 30, 2022). https://doi.org/10.1002/aelm.202200877.

[8] Chen, Li, Mei Er Pam, Sifan Li, and Kah-Wee Ang. “Ferroelectric Memory Based on Two-Dimensional Materials for Neuromorphic Computing.” Neuromorphic Computing and Engineering 2, no. 2 (2022): 022001. https://doi.org/10.1088/2634-4386/ac57cb.